YuanLab AI Releases Yuan 3.0 Ultra: A Flagship Multimodal...

What’s Happening

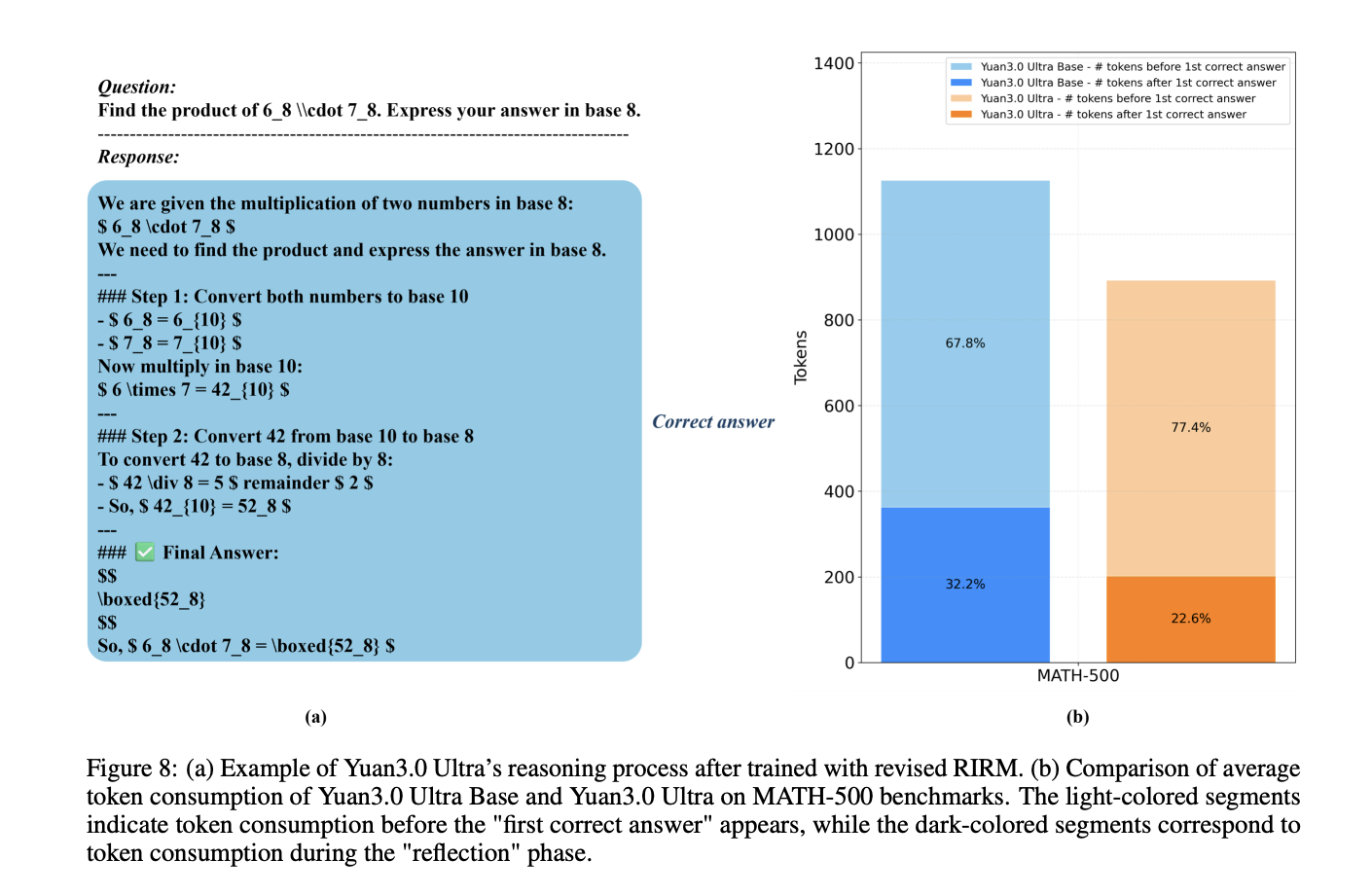

How can a trillion-parameter Large Language Model achieve state-of-the-art enterprise performance while simultaneously cutting its total parameter count by 33.3% and boosting pre-training efficiency by 49%? YuanLab AI has released Yuan 3.0 Ultra, an open-source Mixture-of-Experts (MoE) large language model featuring 1T total parameters and 68... (Let that sink in.)
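To put the 33.3% figure in perspective, here is a quick back-of-the-envelope sketch. It assumes the 1T total is the size after the cut, which the announcement text here does not spell out, so treat the result as a rough implication rather than a reported number. The helper name `size_before_cut` is made up for illustration.

```python
# Rough arithmetic only. ASSUMPTION: "1T total parameters" is the size
# AFTER the 33.3% reduction; the announcement excerpt does not confirm this.

def size_before_cut(size_after: float, fraction_cut: float) -> float:
    """Return the starting size that shrinks to size_after once fraction_cut is removed."""
    if not 0.0 <= fraction_cut < 1.0:
        raise ValueError("fraction_cut must be in [0, 1)")
    return size_after / (1.0 - fraction_cut)


final_params = 1.0e12  # "1T total parameters" from the announcement
cut = 0.333            # "33.3%" from the announcement

start_params = size_before_cut(final_params, cut)
print(f"Implied starting size: {start_params / 1e12:.2f}T parameters")
```

Under that assumption, the model would have started at roughly 1.5T parameters before being trimmed to 1T.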

The model architecture is designed to optimize performance ...

Why This Matters

As AI capabilities expand, we’re seeing more announcements like this reshape the industry.

The Bottom Line

This story is still developing, and we’ll keep you updated as more info drops.

Is this a W or an L? You decide.
