Sakana AI Introduces Doc-to-LoRA and Text-to-LoRA: Hypern...


What’s Happening

Customizing Large Language Models (LLMs) currently presents a significant engineering trade-off between the flexibility of In-Context Learning (ICL) and the efficiency of Context Distillation (CD) or Supervised Fine-Tuning (SFT).

Tokyo-based Sakana AI has proposed a new approach to bypass these constraints through cost amortization. (wild, right?)

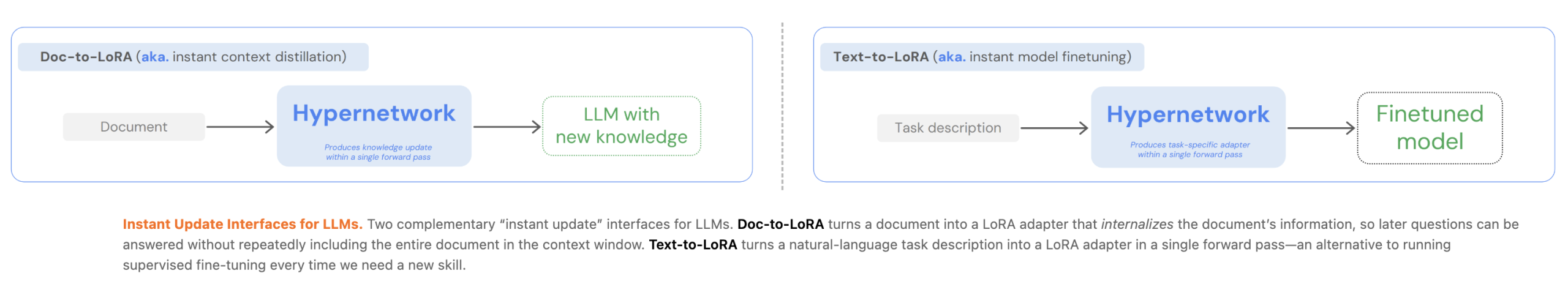

In two of their recent papers, they introduced Text-to-LoRA (T2L) and Doc-to-LoRA, both built on hypernetworks.
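To make the hypernetwork idea a bit more concrete, here is a minimal sketch of one network emitting LoRA weights for another. It is a toy illustration, not Sakana AI's architecture: the single linear layer, the function names (`make_hypernetwork`, `generate_lora`, `apply_lora`), the tiny dimensions, and the hand-written task embedding are all assumptions made for this example. The only parts taken as given are the standard LoRA form (a low-rank update `B @ A` added to a frozen weight matrix) and the general notion of a hypernetwork as a model whose output is another model's weights.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def make_hypernetwork(embed_dim, d_out, d_in, rank, seed=0):
    """Toy hypernetwork (hypothetical): one linear map from a task embedding
    to the flattened LoRA factors B (d_out x rank) and A (rank x d_in)."""
    rng = random.Random(seed)
    n_outputs = d_out * rank + rank * d_in
    return [[rng.gauss(0.0, 0.1) for _ in range(n_outputs)] for _ in range(embed_dim)]


def generate_lora(hyper_w, task_embedding, d_out, d_in, rank):
    """One forward pass: embedding in, LoRA factors (B, A) out."""
    flat = matmul([task_embedding], hyper_w)[0]
    split = d_out * rank
    b = [flat[i * rank:(i + 1) * rank] for i in range(d_out)]
    a = [flat[split + i * d_in:split + (i + 1) * d_in] for i in range(rank)]
    return b, a


def apply_lora(base_w, b, a, scale=1.0):
    """Standard LoRA update: W' = W + scale * (B @ A). base_w is left untouched."""
    delta = matmul(b, a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base_w, delta)]


# Illustrative sizes only; real models use far larger matrices.
d_out, d_in, rank, embed_dim = 4, 6, 2, 3
hyper_w = make_hypernetwork(embed_dim, d_out, d_in, rank)
base_w = [[0.0] * d_in for _ in range(d_out)]

# Stand-in for an encoded task description or document (an assumption).
task_embedding = [1.0, 0.0, -1.0]
lora_b, lora_a = generate_lora(hyper_w, task_embedding, d_out, d_in, rank)
adapted_w = apply_lora(base_w, lora_b, lora_a)
```

In this sketch the hypernetwork weights are random. In a real system they would be trained once up front, which is where the cost amortization comes in: after that, each new adapter costs a single forward pass rather than a fresh fine-tuning run.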

Why This Matters

This adds to the ongoing AI race that’s captivating the tech world.

As AI capabilities expand, we’re seeing more announcements like this reshape the industry.

The Bottom Line

This story is still developing, and we’ll keep you updated as more info drops.

What do you think about all this?
