Google Rethinks AI Memory: Meet Titans & MIRAS

Google Research unveils Titans and MIRAS, an architecture and a framework for giving AI models usable long-term memory beyond what standard Transformers offer.

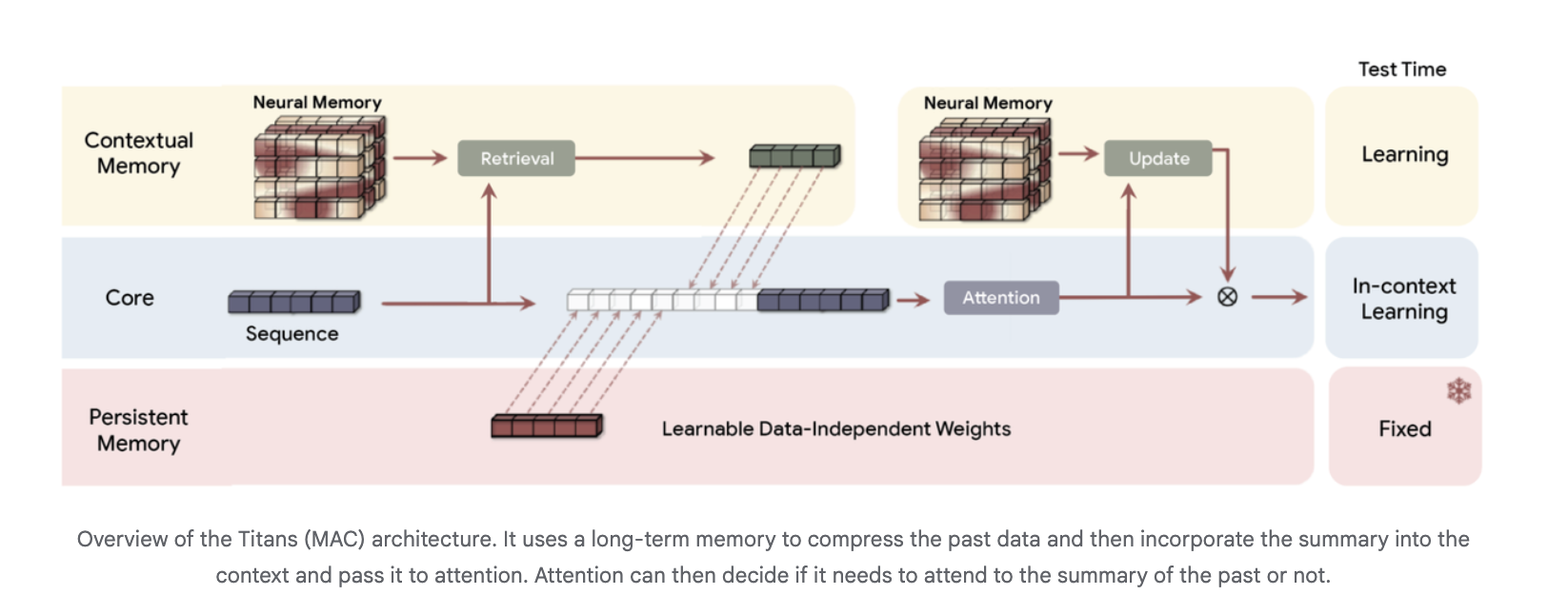

## What’s Happening

Google Research is proposing a new direction for sequence models, aimed at the long-standing challenge of long-term memory. It introduces two key concepts, Titans and MIRAS, designed to move beyond the current limitations of Transformers.

Titans is a specific architecture that integrates a deep neural memory directly into a Transformer-style backbone. Complementing it, MIRAS is a general framework that offers a broader view of how to approach long-context modeling.

## Why This Matters

Current Transformer models often struggle with truly long-term context, frequently losing track of details from earlier parts of a sequence. Titans and MIRAS target that limitation directly, with the goal of more coherent, context-aware AI.

The design also aims to stay efficient: training remains parallelizable and inference cost stays close to linear in sequence length, which matters for scaling up advanced AI systems.

If the approach holds up, it could have several significant impacts:

- AI models could engage in much longer, more consistent conversations.

- It could unlock new possibilities for complex reasoning tasks requiring extensive context.

- Developers might build more robust and reliable AI applications across various domains.

## The Bottom Line

Google’s Titans and MIRAS represent a bold step in the evolution of AI, pushing past the current Transformer paradigm toward models with genuine long-term recall. Could this be the architectural shift that defines the next generation of intelligent systems?
