Cohere Releases Tiny Aya: A 3B-Parameter Small Language M...

Cohere AI Labs has dropped Tiny Aya, a family of small language models (SLMs) that redefines multilingual performance.

What’s Happening

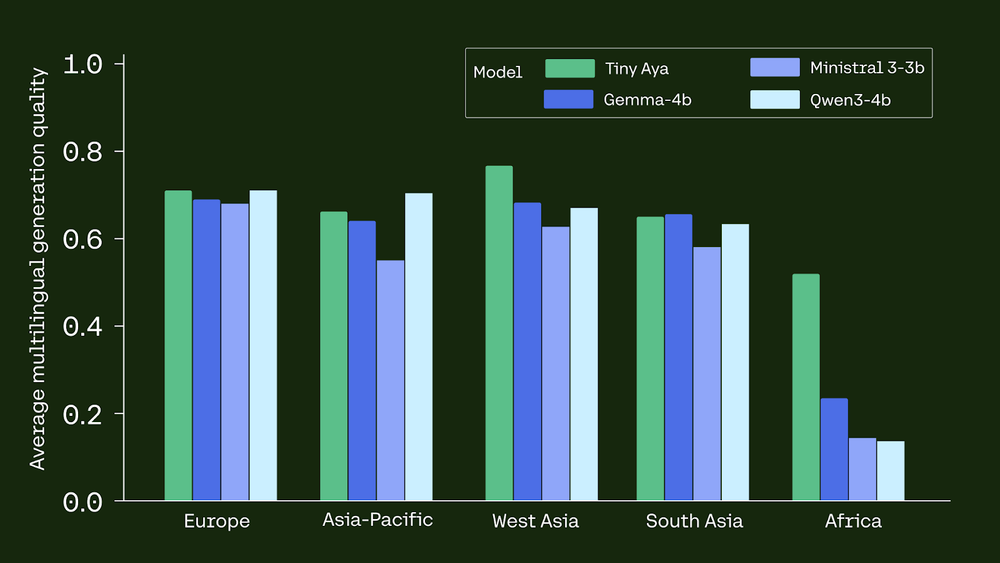

While many models grow by increasing parameters, Tiny Aya uses a 3.35B-parameter architecture to deliver state-of-the-art translation and generation across 70 languages.

Why This Matters

Tiny Aya adds to the ongoing AI race that’s captivating the tech world, and releases like this one are landing at an increasingly rapid pace.

The Bottom Line

This story is still developing, and we’ll keep you updated as more info drops.

Thoughts? Drop them below.
